Tech Features

WHEN MEDICAL SCANS END UP ONLINE: THE QUIET RISK HOSPITALS CAN FIX FAST

By Osama Alzoubi, Middle East and Africa VP at Phosphorus Cybersecurity

As Saudi Arabia races ahead in digital healthcare transformation, imaging infrastructure, diagnostic platforms, and hospital information systems are being modernized at speed, improving outcomes, accelerating workflows, and bringing advanced clinical capabilities to more communities. But beneath this progress lies a quieter risk that rarely makes headlines: medical imaging systems that can be found, and sometimes accessed, directly from the public internet because of simple configuration errors.

Not a dramatic cyberattack. Not a threat actor breaching a firewall. Just avoidable misconfigurations that leave sensitive patient data reachable by anyone who knows where to look.

Medical imaging systems in Saudi Arabia face a persistent security challenge that differs from dramatic cyberattacks. Patient data exposure often occurs through configuration errors that leave systems accessible on the public internet. These technical oversights represent a significant vulnerability in healthcare’s digital infrastructure.

The Kingdom’s Personal Data Protection Law (PDPL) establishes strict requirements for handling health data. This legislation, modeled after international standards, mandates enhanced protection for medical information and imposes penalties for unauthorized disclosure. Hospitals must implement organizational and technical measures to prevent data exposure.

Radiology departments increasingly use digital platforms for case discussions and second opinions. Without proper configuration, these systems might allow unintended access to patient records. Teleradiology services, which expanded significantly during the pandemic, require secure transmission protocols to protect data during remote consultations.

When we hear about data breaches, we often imagine skilled hackers penetrating security systems. The reality is often simpler and more preventable. “Exposed” typically means a system is reachable from the public internet due to setup choices, not a sophisticated intrusion.

This happens in real-world healthcare settings for straightforward reasons: rushed deployments to meet clinical deadlines, vendor-supplied default configurations that were never changed, remote support access left open for convenience, and legacy systems that were connected to modern networks without proper security reviews.

The scale is significant. Research has identified over 1.2 million reachable devices and systems globally, including MRI scanners, X-ray systems, and related medical infrastructure. These are not theoretical vulnerabilities. They are actual systems that can be found and accessed from anywhere with an internet connection.

What gets exposed is more than images

Medical imaging files are not simply pictures. They carry identifiers and metadata that can connect scans directly to real people. Patient names, dates of birth, identification numbers, and clinical details often travel alongside the diagnostic images themselves.

This matters for several reasons. Beyond the obvious privacy violation, exposed patient imaging data creates risks of identity fraud, potential coercion or blackmail, serious reputational damage to healthcare institutions, and erosion of the trust patients place in their medical providers.

Security monitoring platforms have documented cases where exposed systems allowed direct access to both images and patient data, a level of detail that should never be open to anyone outside the clinical team.
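To make the point about identifying metadata concrete, here is a minimal Python sketch. It assumes an imaging file's header has already been read into a plain dictionary keyed by standard DICOM attribute keywords (`PatientName`, `PatientBirthDate`, `PatientID`). The helper names, the sample values, and the short keyword list are illustrative assumptions, not a complete de-identification profile.

```python
# Attribute keywords that commonly identify a patient.
# Assumption: this short list is illustrative only, not a full de-identification profile.
IDENTIFYING_KEYWORDS = {"PatientName", "PatientBirthDate", "PatientID"}


def identifying_fields(header: dict) -> dict:
    """Return the non-empty header entries that point to a real person."""
    return {k: v for k, v in header.items() if k in IDENTIFYING_KEYWORDS and v}


def redact(header: dict) -> dict:
    """Return a copy of the header with identifying values blanked out."""
    return {k: ("" if k in IDENTIFYING_KEYWORDS else v) for k, v in header.items()}


# Hypothetical header values, for illustration only.
sample_header = {
    "PatientName": "DOE^JANE",
    "PatientBirthDate": "19800101",
    "PatientID": "12345",
    "Modality": "MR",
}

if __name__ == "__main__":
    print(identifying_fields(sample_header))
    print(redact(sample_header))
```

Even this toy header shows why an exposed imaging archive is a privacy problem and not only a picture library: three of its four fields identify a person.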

Why this keeps repeating worldwide

Hospitals everywhere use similar device types and manage comparable data flows. The result is that the same setup mistakes appear repeatedly across different countries and healthcare systems. What starts as one hospital’s misconfiguration becomes everyone’s common failure mode.

The medical devices themselves often come with similar default settings. Imaging servers, picture archiving systems, and diagnostic viewers are deployed in comparable ways. When basic security steps are skipped during installation, the exposure follows a predictable pattern.

Health sector cybersecurity guidance from international authorities emphasizes the need for repeatable baseline controls precisely because these patterns recur. Reducing exposure requires not innovation, but consistent application of known protective measures.

Healthcare organizations face a common vulnerability pattern. A major healthcare provider addressed similar challenges across hundreds of hospitals, discovering that default passwords, vulnerable firmware, and device misconfigurations created entry points that threatened patient care and hospital operations across more than 500,000 connected medical and operational devices.

The Saudi-specific layer: connectivity at cluster scale

Saudi Arabia’s healthcare transformation includes the expansion of health clusters that connect multiple facilities into integrated networks. This approach improves care coordination and resource sharing, but it also means that one weak link can affect multiple sites.

National interoperability initiatives support the sharing of imaging and diagnostic reports across the healthcare system. The Saudi health ministry has established specifications for imaging data exchange through the national health information exchange platform, enabling providers to access patient scans regardless of where they were originally performed.

This connectivity is essential for modern healthcare delivery. It allows specialists to review scans remotely, supports second opinions, and ensures continuity of care when patients move between facilities. However, it also increases the need for consistent configuration rules and security standards across all connected sites.

When imaging systems within a cluster are not uniformly secured, the exposure risk multiplies. A misconfigured system in one facility can potentially provide access to data from across the entire cluster network.

A practical checklist hospitals can act on

Healthcare institutions can take concrete steps to reduce exposure risk. These are not theoretical recommendations but proven measures that address the most common vulnerabilities.

First, create a complete inventory. Every hospital should maintain a current list of what is connected to its network, including imaging devices, storage servers, viewing stations, web portals, and remote access tools. You cannot protect what you do not know exists.

Second, check external exposure. Verify that nothing sensitive is reachable from the public internet. This requires technical scanning from outside the hospital network to identify systems that respond to external queries. Many organizations discover exposures they did not realize existed.

Third, restrict remote access properly. Remote connections for maintenance and support should be tightly controlled, require strong authentication methods, and be removed entirely when no longer needed. Convenience should never override security when patient data is involved.

Fourth, implement safe setup procedures. Develop standard build guides for imaging systems, change all default passwords and settings, clearly document who owns each system, and establish responsibility for applying security patches and updates. Industry experience shows that default credentials remain one of the lowest barriers for attackers seeking entry into healthcare networks.

Fifth, conduct continuous checks. Exposure scanning should happen after any network changes, not just once annually. Healthcare networks evolve constantly, and new vulnerabilities can appear whenever systems are added or reconfigured.

These steps align with guidance from international cybersecurity authorities and health sector regulators, which emphasize reducing exposed services and strengthening baseline controls as priority actions for healthcare organizations.
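The second and fifth steps of the checklist can be sketched in a few lines of Python. The sketch below assumes it is run from a machine outside the hospital network, against addresses the hospital owns and is authorized to test. It probes TCP ports 104 and 11112, which are registered for DICOM services; the port list, the function names, and the idea of comparing results with a previous scan are assumptions for illustration, not a substitute for a full external assessment.

```python
import socket

# Ports registered for DICOM services. Assumption: a real check would cover more
# (web viewers, remote-access tools), depending on what the inventory shows.
DICOM_PORTS = (104, 11112)


def is_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def find_exposed(hosts, ports=DICOM_PORTS, probe=is_port_open):
    """Return the sorted (host, port) pairs that accepted a connection."""
    return sorted((h, p) for h in hosts for p in ports if probe(h, p))


def new_exposures(previous, current):
    """Return pairs reachable now that were not reachable in the previous scan."""
    return sorted(set(current) - set(previous))
```

Running `find_exposed` after every network change and passing the result to `new_exposures` along with the previous run gives a simple way to spot a system that has become reachable since the last check. An open port only shows that a service answers; whether it also hands over data needs a separate, authorized review.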

The governance fix: make secure setup part of how clusters run

Individual hospital efforts are necessary but not sufficient. At the cluster level, governance structures must embed security into standard operations.

This begins with cluster-wide minimum standards for imaging systems and remote access. Every facility within a cluster should follow the same baseline security requirements, ensuring consistent protection regardless of which site a patient visits.

Clear ownership must be established for every system. Someone specific should be responsible for applying patches, approving access requests, and regularly checking for exposure. When accountability is diffuse, critical tasks get overlooked.

Procurement processes offer another leverage point. Purchase agreements should require vendors to provide secure default configurations, enable comprehensive logging capabilities, and commit to supported update cycles for the life of the equipment. Security should be a selection criterion, not an afterthought.

These governance approaches reflect sector framework guidance that encourages structured programs and repeatable controls rather than ad hoc responses to individual incidents.

Saudi Arabia has invested heavily in national cybersecurity frameworks and regulatory oversight across critical sectors, including healthcare. The foundation exists. The next step is ensuring those protections extend fully to the expanding ecosystem of Internet of Things (IoT) and Internet of Medical Things (IoMT) devices, where simple configuration gaps can undermine otherwise sophisticated digital progress.

Prevent avoidable incidents

The goal is not perfection. Healthcare systems are complex, and some level of risk will always exist. The goal is removing the easiest path for data exposure: systems sitting openly on the public internet waiting to be found.

In connected healthcare, the quickest wins come from two simple principles: visibility and access control. Know what you have connected, and shut the doors that do not need to be open.

For Saudi Arabia’s health clusters, this represents an achievable objective. The infrastructure investments being made across the Kingdom’s healthcare sector create an opportunity to build security into expansion rather than retrofitting it later.

Medical imaging systems serve an essential clinical purpose. They should not also serve as unintended windows into patient data. With practical steps and consistent governance, hospitals can fix this quiet risk before it becomes a public incident.

In digital healthcare, exposure is rarely a mystery. It is usually a configuration. The question is not whether hospitals can fix it, but whether they will do so before patients pay the price.


How the Middle East Moved Beyond Followers to Build Brands

By Mariam Abouzeid, Marketing Manager, MEA at Nothing Technology

There is a $771 million evolution happening at the center of the Middle East marketing industry. For the past five years, the global narrative around influencer marketing was built on a flawed premise that reach equals influence. Brands in New York and London debated whether the creator economy was a bubble, while marketers obsessed over vanity metrics and fleeting viral moments. In the GCC, we stopped debating and started building. The influencer marketing market in the GCC is valued at $315.5 million in 2025 and is projected to reach $771.6 million by 2032. But the real story is not the money. It is the maturity.

Having overseen communications strategies that collectively generated billions of impressions across the region, I have watched Dubai and Riyadh transform from emerging markets into the global vanguard of creator-led brand building.

The signals are clear. The Middle East is not catching up to the global influencer economy. We are leading it. We are doing it by fundamentally reprioritizing how creators are used, moving them out of the traditional PR umbrella and embedding them as the ultimate engine for mass awareness and deep brand trust. When you look at brands like Huda Beauty, which generates over $75 million a year through the strategic amplification of creator content, you see the blueprint for the future. Huda Kattan built a billion-dollar empire right here in Dubai not by treating influencers as a PR add-on, but by embedding them into the core architecture of the brand. This creator-first model has paved the way for a new generation of Middle East beauty empires, from Youmna Khoury’s Youmi Beauty to Aliona Shcherba’s Aliona Cosmetics, proving that the region is no longer just consuming global beauty trends. It is exporting them.

The Mass Awareness Machine

Before we examine where the Middle East is going, it is worth understanding the foundation it has built. Influencers are the most powerful mass awareness engine ever created. With the GCC on track to have 263,000 active influencers in 2025, brands have access to a decentralized media network that no television buy or billboard campaign can replicate. When 60 percent of Saudi users and 48.1 percent of UAE users use social networks as their primary tool for researching brands and products, creators are not supplementing the media plan. They are the media plan. According to EMARKETER, US social network amplified content ad spending is projected to match creator sponsored content revenues at $14.15 billion in 2027 before surpassing them in 2028. Brands are about to spend more money boosting creator content than they pay creators to make it.

In the UAE and Saudi Arabia, this strategy is already taking hold. Ounass, the Middle East’s premier luxury e-commerce platform, provides a perfect example of this evolution. They do not just pay influencers for one-off posts. They use data-driven insights to identify top-performing creators, then amplify that content through targeted performance marketing, blending emotional storytelling with rational product attributes to build a luxury narrative that resonates deeply with Gulf consumers and drives measurable return on ad spend. But here is where the Middle East diverges from the global playbook. While Western brands are still treating influencers purely as awareness tools, the GCC has moved further up the value chain.

The QSR Reality Check: Awareness vs Consideration

To understand this shift, look no further than the highly competitive food and dining sector in the Middle East. This is a category where influencer marketing has been deployed more aggressively than almost any other. At the mass-market end, brands like Americana (which operates KFC and Pizza Hut), McDonald’s, Papa Johns, and Subway pour millions into influencer campaigns to stay top of mind. Yet AlBaik, the beloved Saudi homegrown champion, topped the YouGov KSA QSR (quick-service restaurant) Rankings 2026 with a consideration score exceeding 50 percent, a position built on decades of genuine consumer love, not just influencer hype. Global giants McDonald’s and KFC follow at 26.9 percent and 23.2 percent consideration respectively, despite their enormous social media presence.

At the premium end, the contrast is even sharper. Shake Shack, Five Guys, P.F. Chang’s, Joe & The Juice, and homegrown hero SALT have all built their GCC presence on the back of creator-driven content, using beautiful food photography, viral reels, and influencer queues around the block. Nobu and Zuma in Dubai have become synonymous with aspirational lifestyle content, their dining rooms perpetually filled with creators documenting every dish.

Consider the rise of % Arabica. The Kyoto-born coffee brand has grown into a $1.3 billion global giant with virtually zero traditional marketing. In the UAE, its minimalist, highly aesthetic stores were designed specifically for the Instagram and TikTok era. The brand relies entirely on organic discovery, user-generated content, and influencer footfall to drive its massive queues. It is the ultimate example of a brand built entirely on the back of social media awareness and creator aesthetics.

The stories of FIX Dessert Chocolatier and Bi Laban are perhaps the most instructive. FIX’s Can’t Get Knafeh of It chocolate bar became a global social media phenomenon in 2024 and 2025, generating a staggering 1,259 percent year-over-year explosion in social conversations. The viral awareness was undeniable, leading to $22 million in sales at Dubai Duty Free in the first quarter of 2025 alone [10]. But as the Ehrenberg-Bass Institute for Marketing Science noted, the viral fad diluted the brand identity, turning a specific product into a generic design brief copied by everyone [11]. Similarly, Bi Laban became a regional sensation engineered through influencer seeding and relentless creator buzz. The queues were real. But when the hype faded, the business fundamentals were exposed. Viral awareness, it turned out, is not a substitute for operational excellence, quality consistency, and genuine consumer loyalty.

The data reveals a stark reality. Hype does not seamlessly translate into habit. While 53 percent of Saudi residents eat fast food weekly, their ultimate choice of where to dine is driven by cleanliness at 48 percent and price at 46 percent, operational realities that no influencer can fake [12]. Influencers drive the initial discovery, cited by 61 percent of consumers as their source for finding new spots, but they are highly inefficient at closing the sale [12].

The Cost of Misalignment: When Influence Breaks Brands

If the Middle East is learning how to build brands through creators, the global market has provided the ultimate cautionary tales of what happens when influence is misaligned with brand equity. The collapse of the Adidas and Yeezy partnership remains the most expensive influencer marketing failure in history. Adidas tied its cultural relevance to a single, highly volatile creator. When the relationship imploded, Adidas posted its first annual loss in 30 years, warning of a $1.3 billion revenue hit due to unsold inventory [13]. The lesson for regional brands is clear. Renting cultural relevance from a creator without building your own brand equity is a catastrophic financial risk.

Similarly, Pepsi’s infamous Kendall Jenner campaign remains the textbook example of scripted authenticity failing spectacularly [14]. Pepsi paid a massive premium for Jenner’s reach, assuming her follower count would automatically translate into cultural resonance. Instead, the tone-deaf execution sparked a global backlash, proving that massive awareness without genuine cultural alignment actively damages brand trust. These global failures have taught Middle East marketers a crucial lesson. Awareness without alignment is dangerous. Influence must be anchored in trust, not just reach.

The Beauty Blueprint: From Awareness to Empire

If the F&B sector illustrates the limits of viral conversion, the beauty and luxury sectors provide the blueprint for the great reprioritization. Huda Kattan built Huda Beauty into a billion-dollar empire using this exact logic. She did not treat influencers as a direct sales channel. She treated them as a massive awareness engine. Today, Huda Beauty generates over $75 million a year through paid media amplification of creator content. The brand understood early that organic influencer posts build top-of-funnel awareness, but it is the paid amplification of that content that drives actual scale.

Similarly, Mona Kattan’s fragrance brand Kayali has mastered this shift. Kayali does not rely on influencers to push promo codes. It uses them to build cultural relevance and awareness around scent layering. The result? According to Sephora merchant partners, Kayali now has one of the highest repurchase rates in the entire fragrance category globally [15]. The brand uses influencers to get the consumer’s attention, but relies on product quality and brand equity to secure the conversion and the repeat purchase.

This blueprint is now being replicated by the most powerful creators in the GCC. Kuwaiti influencer Noha Nabil leveraged her massive regional following to launch Noha Nabil Beauty, building a brand deeply rooted in Arab culture and diversity that earned her a spot on the Forbes Women Behind Middle Eastern Brands list [16]. Similarly, Emirati superstar Balqees Fathi transformed her 13 million Instagram followers into a luxury cosmetics empire with Bex Beauty, merging global innovation with specific GCC beauty ideals [17].

These founders understand that influence is the spark, but operational excellence and cultural alignment are the engine.

The Trust Capital of the World

This is why the Middle East is winning. Brands here have realized that influencers are not a shortcut to conversion. They are the architects of trust. According to the 2026 Edelman Trust Barometer, global trust is contracting inward. People are retreating into insular, values-aligned circles, making it harder than ever for mass corporate messaging to penetrate [18]. Yet the UAE topped the 2026 Edelman Trust Index globally with a score of 80 out of 100, up eight points from the previous year [19].

Why? Because brands in the UAE and Saudi Arabia understood early that trust cannot be broadcast. It must be brokered. As Edelman research highlights, in an insular world, trust is built and scaled by creators who act as cultural mediators [18].

This is backed by new academic research. A 2026 study from Imperial College Business School on influencer authenticity found that the era of renting credibility through one-off posts is over [20]. Professor Omar Merlo’s research shows that when brands treat influencers as long-term partners rather than transactional media channels, they move from a transactional to a transformational relationship with consumers [20].

The Global Validation: Unilever’s Pivot

The model pioneered in the Middle East is now being adopted by the world’s largest advertisers. In early 2026, Unilever made a declaration that validated everything regional marketers have been building. The FMCG giant shifted 50 percent of its total digital advertising budget away from traditional corporate ads and directly into social media and creators [21]. By April 2026, that commitment had translated into a network of 300,000 influencers actively promoting Unilever brands globally [22].

Unilever CMO Leandro Barreto described the strategy as building “Desire at Scale”, using creators to embed brands authentically in culture [22]. This is exactly what the Middle East has been doing for years. When a global giant like Unilever restructures its entire marketing apparatus to match the creator-first model, it proves that influencer marketing has officially graduated from the PR department to become the central nervous system of modern brand building.

The Academic Consensus on Brand Value

The data is clear, and the academic consensus is catching up to what we already know in the GCC. A recent Harvard Business Review study on how brand associations drive customer spending found that what consumers spontaneously think about a brand matters far more than what they agree with on a rating scale [23]. The research shows that brand equity is built through deep, authentic associations over time.

Furthermore, as McKinsey’s 2026 State of Marketing report highlights, branding has returned as the number one priority for marketing leaders globally [24]. CMOs view branding’s ability to drive distinctiveness and embody a clear value proposition as critical to building competitive differentiation [24]. In the Middle East, we know that the fastest, most authentic way to build that distinctiveness is through the voices of trusted creators.

The Way Forward: Leading the Next Era

The next wave of global marketing innovation will not come from Silicon Valley or Madison Avenue. It is coming from Dubai and Riyadh. According to EMARKETER, 57 percent of ad buyers globally say influencer ads and partnerships are their top investment priority for 2026. The world is finally waking up to the power of the creator economy, but the Middle East is already living in its future.

We have moved past the vanity metrics. We have moved past the debate over whether influencers belong in PR or paid media. We have built an ecosystem where creators are the undisputed architects of mass awareness, brand trust, and deep consideration.

The Middle East audience is among the most digitally connected and brand aware anywhere in the world, and it expects marketing strategies that reflect that level of sophistication. Influencer marketing is not just growing here. It is setting the global standard. The brands that recognise this will not just win the region. They will lead the world.


NEW UAE ADVISORY FIRM AETHRA TARGETS GAPS IN GLOBAL HIRING AND MOBILITY STRATEGY

Aethra Advisory, a global hiring strategy and mobility architecture practice, has launched in the UAE as the first independent advisory practice dedicated to helping organisations design their global hiring infrastructure. The business will support founders, HR leaders, scale-up operators, and strategic decision-makers across UAE companies expanding internationally and global businesses entering the UAE and the wider Middle East, helping them navigate cross-border hiring, employment models, mobility programs, and compliance risk in an increasingly global workforce environment.

Aethra Advisory enters the market at a time when more companies are hiring across borders before they have built the systems needed to support those decisions. Many organisations still select Employer of Record (EOR) platforms, vendors, visa routes, or employment structures based on speed, only discovering compliance gaps, cost leakage, or operational limitations months later. Aethra sits at the architecture stage, helping leaders make structural decisions before vendors and execution routes are selected. As the global cross-border workforce and migration solutions market is projected to reach $11.37 billion by 2033, growing at an annual rate of 11.8%, this advisory layer is becoming important for companies that need global workforce models built for scale rather than short-term hiring fixes.

The company works upstream of execution, helping companies define where to hire, how to hire, which infrastructure to use, and where risk may emerge. Its services include Global Hiring Blueprint, Mobility Program Design, and Founder Advisory, covering areas such as EOR versus entity decisions, country decision matrices, immigration pathway design, vendor ecosystem strategy, compliance architecture, mobility policies, and 12-month hiring roadmaps. The practice is self-funded, allowing Aethra to provide independent guidance on employee relocation and global mobility cases for UAE-based and international firms.

Sonam Haider, Founder and Global Mobility Strategist, Aethra Advisory, said: “Companies often treat global hiring as a vendor selection exercise, when the real issue is whether the structure can hold under scale. The UAE currently holds the highest hiring sentiment globally, with 56% of employers planning workforce expansion, but growth at this pace can expose weak EOR models, unclear worker classification, poor market entry choices, and fragmented mobility processes. The gaps usually only surface more than a year later. Aethra Advisory gives leaders an independent view before those decisions become difficult and expensive to reverse.”

The business is founded on more than a decade of operator-side experience across global mobility, consulting, in-house leadership, and global employment platforms. The founder has held roles at PwC, Fragomen, Amazon, Uber, Deel, and Multiplier, giving Aethra an inside-the-machine perspective on how global hiring decisions play out. The company is designed for organisations that are expanding into new markets for the first time, building mobility programs that have outgrown their current infrastructure, or managing global hiring across multiple countries without a clear operating model.

Aethra’s framework is built around five pillars: workforce strategy, hiring infrastructure, immigration pathway design, compliance architecture, and mobility operations. This approach helps companies move from fragmented decision-making to a hiring architecture they can own, adapt, and execute against. The company is already seeing early market validation through founder-level conversations with EOR platform leadership and potential strategic partners across the region.

As the UAE continues to grow from a destination market into a global workforce hub, employee relocation and cross-border mobility requirements will continue to increase across both inbound talent and UAE-based organisations managing global hires. Aethra Advisory aims to support this shift by becoming the strategic advisory layer for global hiring and mobility architecture, helping organisations build workforce structures that are scalable, compliant, and aligned with long-term growth.


Why AI Transformation is a Human Imperative, and the Role the CHRO Must Play

A year after IBM’s Deep Blue defeated Garry Kasparov in 1997, Kasparov did something unexpected. Rather than retreat, he invented a new form of chess he called ‘advanced chess’, pairing human players with computers to see what they could produce together. The result was remarkable. Even moderately skilled players, armed with a standard machine, were capable of defeating both grandmasters playing alone and computers operating without human input. The combination was categorically superior to either element in isolation.

By: David Henderson, Group CHRO, Al-Futtaim

That experiment carries an important lesson for organisations navigating AI today. The instinct, understandable but mistaken, is to frame AI as a technology story. It is not. AI reshapes jobs, redistributes decision rights, resets operating models, and forces us to reconsider deeply embedded ways of working. It intersects directly with creativity, cognition, confidence, identity and employability. It produces as many human questions as it does technical ones.

This is why the organisations that are genuinely converting AI from experiment into competitive advantage are those that have understood it, first and foremost, as a large-scale human transformation, one that demands the business, the CHRO and the CIO working as genuine partners, each bringing what the other cannot.

The organisations winning with AI are not those with the most sophisticated technology. They are those that have most deliberately redesigned how humans and machines work together.

The Case for the CHRO

The most effective AI transformations are driven by a tight three-way partnership:

the business setting the agenda and owning outcomes,

the CIO providing the technology platforms, data infrastructure and governance,

and the CHRO leading the human transformation that determines whether AI delivers value at scale or stalls in pilots.

Each is essential. None is sufficient alone.

What has changed is the recognition that the human dimension, the design of work and decision rights, the building of workforce capability, the management of trust and ethics, the orchestration of adoption across large and diverse employee populations, is not downstream of the technology. It is a primary enabler of it. That is the CHRO’s territory, and it demands the same strategic weight as the technology agenda itself.

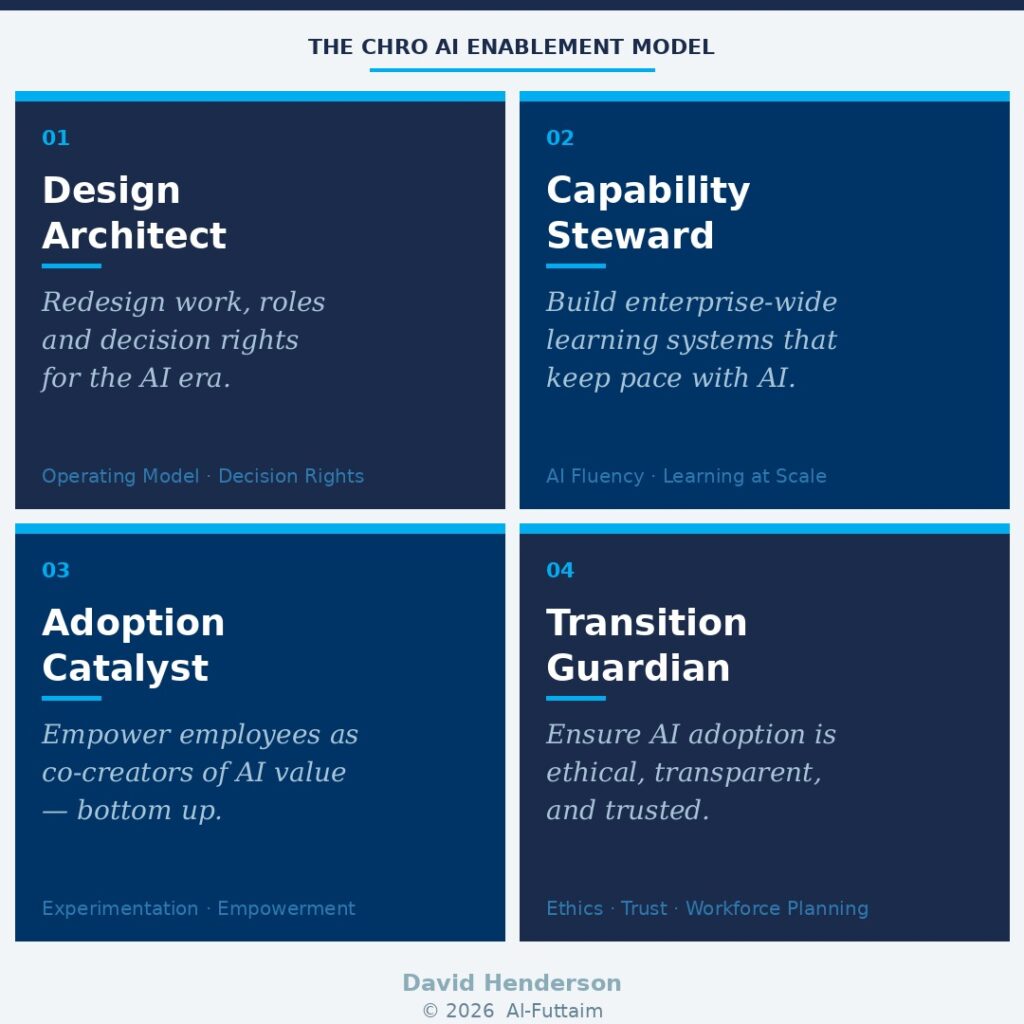

In this paper, I propose a model for how CHROs can lead AI enablement through four interconnected roles: Design Architect, Capability Steward, Adoption Catalyst, and Transition Guardian. Each role addresses a distinct dimension of the human transformation that AI demands. Together, they represent a holistic operating mandate for CHROs who are serious about delivering sustained enterprise value from AI, not just deploying tools.

01) Design Architect: Redesigning work, roles and decision rights for the AI era

AI transformation fails far more often because of organisational design choices than because of technology limitations. When companies deploy AI tools without redesigning how work is done, decision rights blur, accountability erodes, adoption stalls, and productivity gains remain trapped in pilots. The technology is rarely the binding constraint. The organisation almost always is.

The CHRO’s role as Design Architect is to get ahead of that problem. This means providing overarching direction on how work should be redesigned so that human judgment and AI-generated insight are deliberately combined, not accidentally layered on top of each other. It means clarifying which decisions remain human-led, which are AI-supported, and where accountability ultimately sits. And it means building an operating model architecture that is dynamic enough to evolve as AI capabilities continue to develop rapidly.

In my own experience, incrementalism in this domain is almost always destined to fail. The organisations that are getting this right are making bold, decisive design choices, and in some cases, breaking up parts of the organisation that have long been treated as untouchable.

| In Practice: Procter & Gamble. P&G redesigned decision models across forecasting, procurement and product innovation so that AI produces insights and options while humans retain final say on portfolio bets, supplier strategy and innovation priorities. Critically, AI was embedded directly into logistics decision forums rather than remaining siloed in group-level analytics teams, removing information-sharing barriers and enabling real-time decision-making at scale. |

| In Practice: Microsoft. Microsoft intentionally redesigned all knowledge-work roles so that AI copilots handle drafting, synthesis and retrieval, while employees retain judgment, prioritisation and accountability. The result was not simply cost reduction; it was the redeployment of released cognitive capacity into revenue-generating innovation and customer experience improvement. |

Being intentional on organisational design means staying one step ahead of technological adoption, not one step behind it. The CHRO must proactively reimagine how AI reshapes the value chain and translate that vision into operating model decisions — rather than reactively course-correcting after tools have already been deployed.

02) Capability Steward: Building enterprise-wide, continuous learning systems that keep pace with AI

In the AI era, capability, not technology, is the primary constraint on value creation. The organisations that are scaling AI effectively are not those with the most sophisticated tools. They are those whose people know how to use them confidently, critically, and productively in the context of real work.

The CHRO’s role as Capability Steward is to build the learning infrastructure that makes this possible at scale. This means moving decisively away from episodic, one-size-fits-all training models, which are structurally unsuited to the pace of AI change, towards continuous, contextual learning systems that are embedded in daily workflows.

It means developing AI fluency across the workforce, not just in specialist teams. And it means maintaining ongoing insight into which capabilities are emerging, shifting or declining as the skills economy evolves.

| In Practice: Amazon. Amazon treats AI capability as core workforce infrastructure rather than a specialist skill. It has built role-specific learning pathways combining foundational AI fluency with immediate, in-role application, particularly in operations, logistics and corporate functions. The result has been faster adoption of AI tools across large frontline and corporate populations, with measurable productivity gains driven by applied capability rather than isolated expertise. |

| From My Experience: Zurich Insurance. During my time at Zurich, we built an enterprise-wide AI and digital capability ecosystem that combined broad AI literacy with deep domain-specific learning for underwriters, claims handlers and risk professionals. Learning was continuous and embedded in daily workflows. Critically, we also focused on transferable skill identification, enabling us, for example, to rapidly retrain and redeploy claims handlers as customer service agents based on strong overlaps in their underlying skill profiles. That flexibility became a genuine competitive asset. |

The CHRO must protect long-term capability health and resilience, not simply optimise for short-term productivity. Organisations that treat AI learning as a one-time training event will struggle to sustain adoption. Those that build continuous learning as an organisational capability will compound their advantage over time.

03) Adoption Catalyst: Empowering employees as co-creators of AI value, not passive recipients of it

Many CHROs of my generation were trained in a change management orthodoxy that starts at the top of the house: guiding coalition, executive sponsorship, structured project timelines. That model is not wrong, but it is increasingly insufficient for AI.

Top-down governance and strategy remain essential. But scalable AI value does not come from mandates. It comes from the bottom up, from employees who understand the work and are empowered to apply AI where insight is deepest and value most immediate.

The CHRO’s role as Adoption Catalyst is to create the conditions for this to happen: building cultures of experimentation and knowledge-sharing, aligning incentives and recognition to reward participation, and enabling employees to co-create AI use cases rather than simply receive them.

This is a fundamental shift from change management to what I would call change orchestration: leaders creating the environment in which adoption flourishes, rather than driving it through compliance.

| In Practice: Al-Futtaim Blue Loyalty Platform. The clearest proof point I can offer comes from our own experience at Al-Futtaim. The group’s Blue Loyalty Platform uses AI to combine behavioural, transactional and partner data to deliver personalised offers and purchase recommendations across our retail and service channels. What made this work was not central design; it was that the use cases were developed by multi-disciplinary frontline retail employees, working in agile action-learning teams, applying their direct customer insight to build the recommendations. AI was embedded into frontline and digital workflows by the people who understood those workflows best. The result has been measurable revenue uplift driven by use cases rooted in real customer interactions, not boardroom hypotheses. |

| In Practice: Google. Google runs AI adoption through a culture of experimentation supported by internal communities, shared tooling and lightweight governance. Employees apply AI to improve workflows, products and services; successful use cases are productised and scaled through internal platforms. This produces rapid diffusion of best practices, strong employee ownership, and continuous improvement generated by those doing the work. |

Employees need to define the tools they need, not simply learn the tools they are given. That distinction is everything when it comes to whether AI adoption takes root or stalls.

Bottom-up adoption is not a cultural nicety. It is the mechanism through which AI becomes embedded, differentiated and commercially meaningful at scale. Organisations that get this right do not deploy AI. They make AI part of how the organisation thinks.

04) Transition Guardian: Ensuring AI adoption is ethical, transparent, and in the long-term interest of employees

AI introduces legitimate concerns that the CHRO cannot afford to minimise: fairness, transparency, surveillance, bias, job security, long-term employability. If these concerns are not addressed proactively and honestly, trust erodes, and without trust, adoption stalls regardless of how good the technology is.

The CHRO’s role as Transition Guardian is to ensure that AI adoption is consistent with organisational values and strengthens, rather than undermines, the employee value proposition.

This means embedding ethical guardrails and human oversight into AI adoption from the outset, not retrofitting them under regulatory pressure. It means communicating honestly with employees about what AI will change, what it will not change, and what pathways exist for reskilling and redeployment.

And it means treating strategic workforce planning not as an HR administrative function, but as a core enabler of organisational resilience.

Today’s employees need to focus less on specific target jobs and more on building transferable skill profiles that will serve them across a career that is certain to be turbulent. They need to feel that their organisation has their back. The CHRO must make that commitment credible, not through reassurance, but through concrete pathways.

| In Practice: Salesforce. Salesforce has embedded ethical and responsible AI as a prerequisite for scale rather than a control imposed after deployment. The company requires mandatory Responsible AI training, applies human-in-the-loop oversight for AI-enabled decisions, and maintains clear disclosure standards when AI influences employee or customer outcomes. The trust this generates has driven faster adoption, stronger employee engagement, and meaningfully reduced legal, regulatory and reputational risk. |

| In Practice: Unilever. Unilever explicitly links AI adoption to employability and internal mobility. As AI reshapes roles, the company invests heavily in reskilling and redeployment pathways, reframing AI as augmentation rather than displacement. Workforce planning, learning and ethics are intentionally connected rather than siloed, and employees can see a credible future for themselves within the transformation. |

Trust is not a soft outcome of AI transformation. It is the hard prerequisite for scaling it. The CHRO who treats it as such will find that ethical, transparent AI adoption does not slow the transformation down — it is the thing that makes it durable.

The CHRO Skill Set for AI Enablement

Having defined the four roles the CHRO must play, it is worth being specific about the skills and attributes required to execute each one. In an environment where AI success is increasingly determined by organisational design, capability building, adoption dynamics and trust, not technology, these capabilities define whether the CHRO is shaping the transformation or reacting to it.

| Design Architect | Capability Steward | Adoption Catalyst | Transition Guardian |
| --- | --- | --- | --- |
| Operating Model Design | Learning at Scale | Change Orchestration | Ethical Judgement |
| Work & Role Deconstruction | AI Fluency Translation | Employee Empowerment Mindset | Trust Stewardship |
| Decision Rights Clarity | Skills Architecture & Workforce Sensing | Incentive & Recognition Design | Strategic Workforce Planning |
| Systems Thinking | Action Learning Systems | Business Experimentation Literacy | Risk Anticipation |
| Enterprise Co-Creation | Future Capability Stewardship | Cultural Signal Awareness | Clear, Honest Communication |

A few points of emphasis.

As Design Architect, the most underrated skill is enterprise co-creation — the confidence and credibility to act as a genuine co-owner of AI strategy with the CIO and business leaders, not merely as a supporting function.

As Capability Steward, future capability stewardship is distinct from short-term productivity optimisation; CHROs must protect long-term organisational resilience, not just near-term performance.

As Adoption Catalyst, cultural signal awareness is often more powerful than formal programmes: leadership language and behaviour either accelerate or silently undermine adoption at scale. And as Transition Guardian, clear and honest communication, including on uncertainty and difficult trade-offs, is the foundation on which all of the other skills rest.

Without it, none of the others land.

Conclusion: The Human Transformation Imperative

Organisations that are genuinely winning with AI are not those with the most sophisticated technology stacks. They are those that have most deliberately and thoughtfully redesigned how humans and machines work together, rethinking operating models, building capability at scale, empowering employees as co-creators, and managing the transition with ethics and transparency.

The CHRO who grasps this, who acts as Design Architect, Capability Steward, Adoption Catalyst and Transition Guardian simultaneously, becomes one of the most important executives in the organisation. Not because HR has staked a claim to a technology agenda, but because the most important levers for AI value creation are organisational and human, and those are precisely the levers that CHROs are equipped to pull.

Kasparov’s advanced chess experiment showed us, a quarter of a century ago, that the most powerful outcomes emerge not from humans or machines working alone, but from their deliberate, skillful combination. The CHRO’s mandate is to make that combination work, at enterprise scale, at pace, and without losing the trust of the people it depends on.

That is not a supporting role. It is a defining one.

_______________________________________________________

David Henderson is Group CHRO of Al-Futtaim Group, one of the Middle East's largest diversified conglomerates. He has previously served as CHRO of Zurich Insurance Group, MetLife and PepsiCo.
