Cover Story

Powering the Future of Data Centers with AI-Driven Energy and Cooling Solutions

Stephen Liang, Chief Technology Officer, and EVP Infrastructure & Solutions, Vertiv

With the rapid expansion of data center investments across the Middle East, fuelled by the region’s abundant and affordable power resources, Technology Integrator spoke with Stephen Liang, Vertiv’s CTO, to explore the company’s latest innovations in cooling and power solutions. These advancements are designed to meet the demands of high-density data centers while prioritizing energy efficiency, positioning Vertiv at the forefront of data center innovation and infrastructure development in the region.

He also delved into Vertiv’s strategic collaborations with industry giants like NVIDIA and other leading chipmakers, which are instrumental in ensuring data centers are future-ready for the growing demands of AI applications. Liang emphasized how Vertiv’s global R&D network drives innovation, enabling the company to develop tailored solutions that address the unique needs of Middle Eastern markets.

As the Chief Technology Officer, could you share insights into how you’re utilizing AI within your organization?

AI is both a dynamic frontier and an evolution of established methodologies. In many respects, it’s a modern enhancement of time-tested techniques. For instance, we’ve long used large-scale data models for data mining and predictive maintenance and leveraged sophisticated machine learning algorithms to support our design processes and simulations, especially for analyzing power and cooling flows. Today, AI amplifies these capabilities, accelerating the precision, speed, and depth of learning and analysis. Looking forward, we’re pushing the envelope in assisted programming and design, exploring AI’s potential to shape Vertiv’s future innovations.

At its core, AI unifies a suite of advanced technologies under one framework, driven by high-performance computing that enables significantly faster, more complex task execution. Where we once relied on human productivity alone, we now have computers driving transformations at an unprecedented scale, redefining workflows and drastically shortening innovation cycles. While human capabilities eventually plateau, AI empowers our teams to reach new productivity heights, creating products and solutions that closely align with customer needs. This is AI’s transformational power in action.

How is Vertiv leveraging its presence in the Middle East and its partnerships with hyperscalers and chip manufacturers to address the growing demands on data centers driven by AI advancements?

When engaging with data center operators worldwide, one of their most pressing challenges is ensuring uninterrupted and reliable power. The Middle East, with its robust, cost-efficient power sources, has become a strategic hub for data center growth, particularly those focused on AI applications. Vertiv is well-positioned to support this expansion, with deep roots in the region, including a state-of-the-art production facility in Ras Al-Khaimah, and longstanding regional partnerships that enable us to support virtually any data center project.

Additionally, our strategic collaborations with hyperscalers and major chipmakers such as NVIDIA place us at the forefront of data center evolution. As AI technology advances and the demand for data centers intensifies, we’re helping clients’ infrastructure become future-ready. Today, data center planning requires a proactive, visionary approach; after years of plateauing demand, AI has reignited the industry, driving a resurgence in infrastructure innovation.

By collaborating with leading technology providers and data center operators, Vertiv ensures rapid deployment of cutting-edge technologies with resilient, scalable infrastructure. We’re laying the foundation for the next wave of AI-driven advancements and solidifying the region’s place in this transformative landscape.

How has Vertiv’s long-standing partnership with NVIDIA, especially in AI research, shaped its approach to supporting cutting-edge technology deployment?

Our partnership with NVIDIA has been pivotal, particularly in advancing critical digital infrastructure to accelerate AI adoption. Through power and cooling initiatives with NVIDIA, Vertiv has provided extensive expertise to show our ability to support global deployments at scale while educating and empowering the wider AI ecosystem.

Our unique blend of technical depth and global presence has established Vertiv as a trusted partner to industry giants who count on us for seamless, efficient deployment of their most advanced technologies.

How do you balance the increasing demand for computing power with the need for energy efficiency?

AI is often labelled as “energy-intensive,” but it’s more accurate to view it as a technology that enables higher productivity per kilowatt. Measured in Floating Point Operations Per Second (FLOPS) or Million Instructions Per Second (MIPS), today’s computers achieve exponentially higher outputs for the same energy input. While modern Graphics Processing Units (GPUs) indeed draw more power, their processing capabilities have advanced even faster, delivering greater efficiency in computational tasks.

Traditionally, Power Usage Effectiveness (PUE) has gauged efficiency by comparing power usage in compute tasks with total facility power. But PUE alone doesn’t fully capture the extraordinary gains in processing power achieved per energy unit. If we’d limited ourselves to Central Processing Unit (CPU) level performance, we’d save energy, but at the cost of breakthrough advancements in fields like medicine, scientific research, and sustainable energy solutions.

The tech industry is innovating vigorously for sustainability. UPS systems, which were once only 80% efficient, now achieve up to 98% or higher, while some cooling systems’ PUE has improved from 3-4 to approximately 1.2. This evolution underscores our commitment to balancing demand with sustainable infrastructure, driving positive impact even as we scale for a data-driven future.
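
To make the efficiency figures above concrete, here is a minimal sketch of the arithmetic. It assumes the standard definition of PUE (total facility power divided by IT equipment power) and a hypothetical 1,000 kW IT load; the function names are illustrative and do not come from any Vertiv tool.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_kw(it_equipment_kw: float, pue_value: float) -> float:
    """Non-IT power (cooling, conversion losses, lighting) implied by a PUE."""
    return it_equipment_kw * (pue_value - 1.0)


def ups_loss_kw(load_kw: float, efficiency: float) -> float:
    """Power lost in a UPS that delivers load_kw at the given efficiency (0-1)."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return load_kw / efficiency - load_kw


# Hypothetical 1,000 kW IT load, used only for illustration.
it_load_kw = 1000.0

# PUE of 3 versus roughly 1.2, the range quoted in the interview.
legacy_overhead = overhead_kw(it_load_kw, 3.0)
modern_overhead = overhead_kw(it_load_kw, 1.2)

# UPS efficiency of 80% versus 98%, also quoted in the interview.
legacy_ups_loss = ups_loss_kw(it_load_kw, 0.80)
modern_ups_loss = ups_loss_kw(it_load_kw, 0.98)

print(f"Overhead at PUE 3.0: {legacy_overhead:.0f} kW")
print(f"Overhead at PUE 1.2: {modern_overhead:.0f} kW")
print(f"UPS loss at 80%: {legacy_ups_loss:.1f} kW")
print(f"UPS loss at 98%: {modern_ups_loss:.1f} kW")
```

Under these assumptions, the same IT load carries about a tenth of the non-IT overhead at a PUE of 1.2 that it does at a PUE of 3. This shows why PUE tracks facility overhead rather than the computation delivered per kilowatt.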

How is Vertiv adapting its power and cooling solutions to meet the growing demand for higher power densities in data centers, especially with the integration of AI technologies, and what role do renewable energy sources play in this evolution?

Vertiv is actively partnering with leading tech developers to craft advanced cooling and power solutions that accommodate surging power densities in data centers. Just a few years ago, most data centers operated at about 10 kilowatts per cabinet. Today, some AI-focused hardware demands up to 40 kilowatts, and projections now reach from 80 to 150 kilowatts per rack. This rapid rise in power density requires pioneering approaches to power management and cooling, and Vertiv is spearheading this evolution from the grid down to the chip level.

AI workloads create fluctuating power demands that can impact grid stability. To address this, Vertiv collaborates with chip manufacturers and hyperscalers to develop technologies that stabilize power requirements while helping to maintain grid reliability. Our adaptive energy storage systems smooth out power fluctuations and help to seamlessly integrate renewables like wind and solar into the grid, promoting more efficient data center operations.

With a flexible, globally tailored approach to cooling and power, Vertiv ensures mission-critical infrastructure that supports a wide array of high-performance applications globally. This combination of advanced technology and regional customization positions Vertiv as a leader in future-ready data center solutions.

How is Vertiv empowering its global R&D network and partnerships to drive innovation in data center technology?

It’s an incredibly exciting era for Vertiv and the data center industry. Having witnessed several transformative waves, I believe we’re on the cusp of another pivotal shift. Vertiv’s commitment to cutting-edge R&D has never been stronger, with strategic partnerships at an all-time high positioning us alongside industry trailblazers. Major tech companies now look to Vertiv to create the future of data centers, engaging with top research institutions to advance capabilities at the frontier of the industry.

Vertiv’s extensive, strategically positioned R&D network allows us to swiftly adapt to evolving market needs, delivering region-specific solutions that set global standards. Through this expansive innovation ecosystem, we’re advancing the future of data centers and meeting the complex demands of the AI era with resilience and ingenuity.

Cover Story

The Shift to Unified Content Workflows Is Redefining Enterprise Media!

Walk into any modern content setup today, whether it’s a podcast studio, a corporate webinar room, or a hybrid event environment, and you’ll see a familiar pattern, one that reflects how fragmented the content production stack has become.

A microphone connected to an interface.

An interface connected to a laptop.

A laptop running multiple layers of software to mix, switch, stream, and record.

It works, but it’s rarely seamless.

Because the biggest challenge in content creation today isn’t access to tools, it’s understanding how they all fit together.

The Real Problem: Too Many Tools, Too Little Clarity

The rise of podcasting and video content has created a new kind of friction. Users are no longer asking what they can create; they are asking how to make the tools work together.

Recording audio separately, syncing video later, transferring large files to high-end machines, and relying on multiple software layers have become the default workflow. It works, but it is inefficient, expensive, and prone to failure.

The expanding ecosystem of devices, features, and formats has made even basic setup decisions unnecessarily complex.

Users and creators already recognize the potential of RØDE’s products to simplify and clarify the workflow and to elevate the overall experience.

From Tools to Unified Systems

This is where the shift begins to stand out.

What we are seeing is not simply the addition of new features, but the consolidation of functions.

Mixer. Recorder. Audio interface. Video switcher. Stream encoder.

What traditionally required a stack of hardware and software is now being brought into a single console environment.

For creators, that simplifies production.

For enterprises, it changes how content infrastructure is designed.

As this shift gains momentum, it is also being acknowledged at a leadership level.

“Real innovation isn’t about adding more; it’s about removing friction and enhancing workflows. With the introduction of platforms like the RØDECaster Video, we’re starting to see audio and video unified in one system, unlocking faster, more focused creative output.”

Kalinda Atkinson, Global Marketing Director, RØDE

Why This Matters Beyond Creators

This shift is not limited to podcasters or streamers. Enterprises are increasingly building in-house content studios, executive communication channels, internal video platforms, and hybrid event capabilities as part of their broader communication strategy.

In these environments, complexity quickly becomes a bottleneck. Multiple tools often translate into longer setup times, increased points of failure, and a growing dependency on technical operators to manage what should ideally be straightforward workflows.

A unified system begins to reduce that friction, allowing teams to focus less on managing the process and more on the output itself.

The End of the Laptop-Centric Setup

One of the most significant changes is subtle: the laptop is no longer central.

With recording, streaming, and switching built directly into the console, content can now be produced without relying on external software or intermediary platforms. Audio and video routing happens natively within the system, removing the need to manage multiple layers of tools.

This, in turn, reduces reliance on tools like OBS Studio and lowers the need for high-performance machines in the production chain.

Broadcast Capabilities, Simplified

Features that were once limited to broadcast environments are now being integrated directly into compact systems. Capabilities such as multi-camera switching, ISO recording with separate tracks for each input, audio-based automatic switching between speakers, and network-driven video workflows like NDI are no longer confined to high-end production setups.

For enterprise teams, this translates into professional-grade production without the need for dedicated control rooms or complex broadcast infrastructure.

Modularity Signals Long-Term Thinking

Another important shift lies in how these systems evolve over time.

With expansion options such as adding video capabilities to existing audio consoles, RØDE is enabling a more modular approach to production. Instead of replacing entire systems, users can extend them based on their needs.

This becomes particularly relevant for organizations that may begin with audio-first content using consoles such as the RØDECaster Duo or RØDECaster Pro II, gradually expanding into video production with consoles such as RØDECaster Video, RØDECaster Video S, or even the RØDECaster Core, and scaling internal media capabilities over time. The result is a more flexible investment model that reduces upfront costs while supporting long-term growth.

A Shift in the Competitive Landscape

On the surface, this still appears to sit within the audio hardware category. In practice, however, it competes with something far broader.

As these systems begin to handle capture, processing, and output within a single environment, they start to overlap with production software ecosystems, video switching platforms, and content workflow tools.

The implication is clear: when orchestration happens within the system itself, the need for external layers begins to diminish.

The Opportunity Ahead

As the layers of complexity fade, creators will have more time for creative storytelling and less time worrying about the setup.

The new products and technology from RØDE not only remove setup barriers but also enable creators and enterprises to operate at a fully professional standard, accelerating both creativity and innovation.

Srijith KN covers enterprise technology, media infrastructure, and digital transformation across the Middle East.

Cover Story

Cloud Waste Isn’t About Visibility, It’s About Timing, Says Atmoz CEO

“Cloud waste isn’t created by bad engineers. It’s created by systems that show problems too late. Once I saw that, it became clear: the solution wasn’t better reporting. It was prevention.” – Atmoz CEO Yael Shatzky

Yael Shatzky didn’t set out to build a company around cloud costs. What she noticed, after more than 25 years across enterprise technology, product marketing, and growth at organisations including Amdocs and Microsoft’s R&D ecosystem, was a pattern.

Not just rising cloud spend, but a deeper structural disconnect in how it’s managed.

If you were introducing yourself and Atmoz to someone outside tech, where would you begin?

I’d say I’m building a company that changes how people think about waste—specifically cloud and AI waste.

Imagine a house where electricity prices constantly change depending on what you use and when, but no one knows the cost. Lights stay on, AC runs all day, and while you know you’re wasting about 30%, you have no way to prevent it. The only signal you get is last month’s bill.

That’s how companies operate in the cloud today.

Atmoz changes that by bringing cost awareness into the moment decisions are made, helping teams make smarter choices without disrupting how they work. The result is simple: waste is prevented before it happens.

What is the core problem Atmoz is solving—and where has the market gone wrong?

The market has focused on visibility: dashboards and reports that explain what already happened.

But the problem isn’t visibility.

It’s timing.

By the time companies see the data, the money is already spent and systems are already in production. Even with perfect visibility, nothing changes.

Atmoz works at the moment engineers are building, engaging them with immediate, simple recommendations that don’t slow them down. That’s where prevention becomes possible.

What does ‘AI-first’ product development look like at Atmoz?

We built a data foundation that reconstructs cost signals as resources are created, before billing data exists. That’s the hard part.

On top of that, we use AI where it matters most: interaction and execution. Our AI agent takes accurate, contextual data and delivers actionable recommendations directly within developer workflows.

Because the system is grounded in precise data, the guidance isn’t just intelligent, it’s reliable and immediately usable.

What are the biggest challenges in getting engineers to trust AI-driven recommendations?

Interestingly, the challenge isn’t trust in AI; it’s getting engineers to believe that prevention is even possible.

For years, companies have been told they can reduce costs, yet around 30% of cloud spend is still wasted. That’s because most tools analyse waste after it happens; they don’t stop it.

Once engineers see an issue flagged in real time, with clear context and a simple fix, the skepticism disappears. It becomes tangible.

What is one leadership mistake that fundamentally changed how you operate?

Focusing too much on the product, and not enough on marketing early on.

Great products don’t speak for themselves, especially when you’re creating a new category. Marketing isn’t something you layer on later; it shapes how the product is understood and adopted. Starting early makes a significant difference.

Where do you see the biggest inefficiencies today?

The biggest inefficiency is the disconnect between engineering decisions and their financial impact.

Every time a developer deploys infrastructure or triggers an AI workload, they’re making a financial decision, without visibility into its cost implications.

AI is amplifying this. Costs are more volatile, and traditional feedback loops can’t keep up.

Atmoz brings cost awareness into that decision point, making efficiency part of the engineering discipline, much like security became over time.

At this stage, how do you define success?

Success isn’t a single milestone, it’s a series of moments.

Signing a new customer. Launching a capability that impacts spend. Getting a call from a customer excited because they just saved $30K on something they didn’t even know was happening.

Those moments are what drive us forward.

You’re defining a new category. What does it take to change long-held assumptions?

It starts with conviction. You’re asking people to question something they’ve accepted as normal.

But conviction alone isn’t enough; proof is everything. Category change happens when someone sees it working in their own environment and has that “aha” moment.

That’s why we focus on immediate, tangible value. When waste is prevented in real time, the mindset shift follows naturally.

Resilience also matters. When you challenge established models, you will be dismissed. The key is to stay grounded in the problem and keep showing evidence.

Has the industry been solving cloud waste the wrong way? Why hasn’t it changed?

I wouldn’t say wrong. FinOps tools solved the problem they were designed for. They brought visibility and governance, which was critical.

But they were built on the assumption that cost is something you analyse after it happens.

Today, cost is created instantly, when infrastructure is provisioned or AI workloads run. But feedback still comes later. That gap is the issue.

What’s changed is the pace of engineering. With AI, decisions are faster and costs are more dynamic. What used to be inefficient is now unsustainable.

That’s why prevention isn’t just an improvement, it’s becoming essential.

How will engineering teams work differently in five years?

Cost will no longer be treated as something external, owned by finance. It will become part of the engineering feedback loop, like performance or reliability.

Atmoz brings that awareness into everyday workflows, guiding better decisions without adding friction.

Over time, this shifts behaviour. Waste isn’t something you detect and fix later, it simply doesn’t get created.

The result is not just lower cost, but faster teams, better decisions, and more room to innovate.

Cover Story

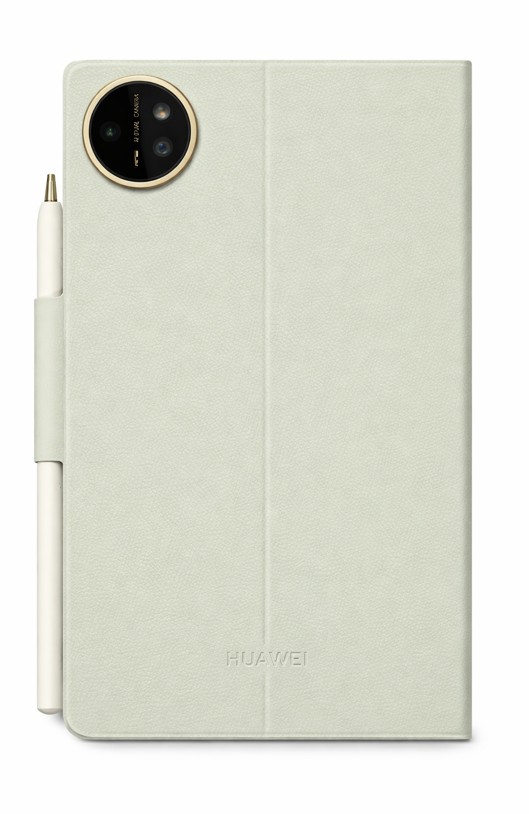

Huawei MatePad Mini: A Tablet That Feels Like a Real Notebook

Huawei’s compact tablet feels less like a gadget and more like a thoughtfully designed digital notebook, blending portability with everyday productivity.

I have been using Huawei’s MatePad 11.5 S for a while now for writing, editing, and most of my day-to-day journalistic work. It has turned out to be a surprisingly capable productivity device. So, when the MatePad Mini arrived, I was curious to see how Huawei would translate that experience into a much smaller form factor.

Reviewed By: Srijith KN, Senior Editor, Integrator

Design and Accessories

The first thing that stood out during the unboxing was not just the device but its accessories. Huawei has clearly put thought into the overall experience. The tablet ships with well-designed cases, including a transparent option and a diary-style booklet cover.

The diary cover, in particular, immediately felt right to me. It makes the tablet feel less like a gadget and more like a compact notebook you would carry every day. There is a certain familiarity to it, almost like picking up a journal rather than a device.

Huawei also continues to include a charger in the box, and this one comes with a 66W unit, a thoughtful touch at a time when many brands have moved away from bundling one altogether.

Everyday Portability

The 8.8-inch tablet immediately feels comfortable in the hand. It is extremely light and compact, measuring just 5.1 mm thick and weighing around 255 grams. That portability is noticeable right away.

In many ways, it feels closer to carrying a paperback than a traditional tablet. I currently use the Nothing Phone 3 as my daily device, and at times even that feels heavier than this. The MatePad Mini, on the other hand, almost disappears in your hands.

Huawei is also using a magnesium alloy body here, which keeps the device light without compromising on rigidity. Given how thin it is, that added structural strength feels reassuring.

A Paper-Like Experience That Works

Last night, I found myself reading long articles on it for hours without feeling any strain. That is where the device really begins to make sense.

It genuinely feels like a digital paper booklet, built for reading, note-taking, writing, or quickly catching up on work while on the move. The green variant, in particular, features Huawei’s PaperMatte display, and it is easily one of the most distinctive aspects of this device.

Huawei claims the display reduces up to 99 percent of ambient light interference, and in real-world use, that translates into a noticeably glare-free experience. Even under indoor lighting, reflections are minimal, and the screen remains comfortable to look at for extended periods.

At the same time, it does not compromise on performance. With up to 1800 nits of brightness, a 120Hz refresh rate, and a wide color gamut, the display manages to balance readability with visual richness, something that is not easy to get right in smaller devices.

There is also an eBook mode that shifts the display into a black-and-white, paper-like view, along with other settings designed to reduce eye strain during longer reading sessions. Additional options like eye comfort and sleep mode further support extended use.

Writing and Creativity

I also spent some time using the M Pencil for quick notes, and the experience feels surprisingly close to paper. Coming from the MatePad 11.5 S, Huawei continues to deliver one of the better stylus experiences in this space.

The M Pencil Pro adds more depth to the experience than expected. With different tip options and subtle haptic feedback, writing feels more tactile and intentional, rather than just tapping on glass.

Paired with the updated Huawei Notes app, the experience becomes more refined. Features like handwriting enhancement subtly improve legibility without taking away the personal feel of your writing, making it especially useful for quick notes and longer-form thinking.

Hardware and Performance

The MatePad Mini packs a 6400 mAh battery with support for fast charging, capable of going from zero to full in about an hour. On paper, it looks promising, though I will reserve judgment until I have spent more time with it.

On the hardware side, it includes a 50MP rear camera and a 32MP front camera, along with stereo speakers, Wi-Fi 7, USB-C 3.0, and a fingerprint sensor, something I wish Huawei had included on the MatePad 11.5 S as well.

Editor’s Perspective

Whenever I am seen using a Huawei device, the first question that comes up from people around me is usually about the ecosystem, particularly about Google services.

I too had similar concerns earlier, but having used Huawei devices for a while now, the experience has been smoother than expected. HarmonyOS feels clean and fluid, and tools like GBox make it possible to access most essential apps. Even for someone deeply tied to Google services, it has been more manageable than I initially thought.

What becomes clearer over time is that this is not just a smaller tablet. It sits somewhere between an eBook reader and a productivity device, built for focused, everyday use.

The MatePad Mini does not feel like Huawei shrinking a tablet. It feels like a refinement of how a compact device should actually be used. Its notebook-like form, paper-inspired display, and practical accessories make it easy to carry, pick up, and use throughout the day.

It is still early days, but the first impressions are strong. In a crowded tablet market, this feels like one of the more purposeful and interesting form factors among the compact tablets we have seen in a while.
